The Limits of Optimization: Technology, Nihilism, and Giving a Damn, Part II

The Emptiness of Optimization and Automation

Note: This is a sequel to my post from September 19: The Limits of Autonomy: Technology, Nihilism, and Giving a Damn, Part I. The sequel to this sequel is: The Limits of Care: Technology, Nihilism, and Giving a Damn, Part III. The series continues into Part IV: Punk as a Response to Nihilism.

The vision of the major AI companies today is clear: automate as much human work as possible, and compete with each other so that their systems can be the ones involved in the intimate details and choices of your everyday life, as your constant advisors and even artificial friends.

Tristan Harris gave a jarring, if perhaps exaggerated, run-through of this situation in his recent interview with Jon Stewart.

Any prognostications about AI soon taking over all human work, including skilled, embodied, manual work (and not just work done from behind a computer) are likely overblown for reasons we discussed last time. Even so, a recent Stanford study finds entry-level jobs susceptible to AI-automation have already declined by as much as 13%.

Meanwhile, two major AI companies just released apps (Vibes and Sora) enabling users to churn out feeds of flabbergasting, fake, nonsense content. The apps are designed as social media to capture our attention and further habituate us to constant use of these AI technologies.

As I discussed in the prequel post, much alarm around all of this focuses on threats to individual autonomy: won’t offloading decisions, work, and attention to AI compromise our powers of independent judgment? Won’t we become mindless sheep?

Autonomy matters. But a focus on individual autonomy is too narrow.

AI intensifies pressures already ever-present in our technological modernity: to treat everything in life as a problem to solve, to maximize efficiency, to optimize and get the most output for the least input.

In this context, young people are speaking openly of their nihilism, of the queasy sense that nothing is worth doing, that life has become an exhausting grind toward no clear meaningful end. Now AI arrives offering to take over, exacerbating the emptiness it promises to fill.

A Deeper Diagnosis of the Nihilism of Our Times

The prequel to this post, “The Limits of Autonomy: Technology, Nihilism, and Giving a Damn, Part I,” began to explore this rising tide of nihilism among young people today.

The mood of nihilism, so strikingly articulated by voices such as Kyla Scanlon and Wendy Syfret, involves a diminished feeling of involvement in the world; an impression of being a baffled spectator to a way of life that doesn’t make sense anymore; a queasy intuition that there is ultimately no point to life, nothing worth doing or committing oneself to in the world today.

Last time, I glossed nihilism as the “atrophy of our capacities to care.”

On top of a generalized crisis-fatigue (financial crises, climate disasters, political crises, not to mention the so-called “metacrisis” and “polycrisis”), disoriented young people grate against our contemporary culture of compulsive optimization and competitive status-seeking (see, e.g., David Brooks’s account of this grueling and joyless rat race among elite college students in his piece, “The Most Rejected Generation”).

Life hits like a series of optimization problems to solve, hurdles to clear, and ladders to climb; fragmented by social media, increasingly mediated by AI, and disembedded from concrete gathering places, face-to-face friendships, local community, and our vulnerable ecological abode.

These patterns fit and extend the premonitions about living in a technological age that Martin Heidegger offered in his essay “The Question Concerning Technology” (from the late 1940s to the early 1950s).

Listen to Wendy Syfret’s description of the compulsory “endless optimization” she found herself engulfed by:

“I’m honestly not sure what I’m trying to achieve when I break my Sundays into thirty-minute increments, or adorn myself with tech that threatens my personal privacy in pursuit of invasive data, but the sense of control and power this way of living offers is comforting. Approaching my sparse leisure time with the same steely commitment I apply to work makes me feel like I’m using my life right by not wasting a moment.”

(The Sunny Nihilist, by Wendy Syfret, p. 40)

The fact that these bleak considerations are seemingly felt more acutely by younger generations does not invalidate them. On the contrary. Zooming out, Scanlon’s and Syfret’s observations help us see that nihilism is not just a matter of individual attitudes.

Nihilism is a historical condition in which people, under pressures of “endless optimization,” lose touch with the possibility of caring, of living their lives tending to meaningful projects, relationships, or purposes beyond themselves that matter for their own sake, rather than because they get us some further thing or open up further options.

Syfret thus gives voice to what Heidegger scholar Iain Thomson calls the optimization imperative: “that technologizing drive to ‘get the most for the least’ that demands effective action now.” See Thomson’s excellent, compact recent book, Heidegger on Technology’s Danger and Promise in the Age of AI.

Optimization and control are detrimental to care. (See my post, “Care at the Edge of Automation” for more on this point.)

Yet, caring and tending to what matters are indispensable for a life experienced as meaningful and worthwhile.

The influence of the optimization imperative has turned Millennials and Gen Z’ers into a self-described “burnout generation.” There is a standing pressure to live life as some kind of engineering problem, and a lingering emptiness that follows from mistaking control for meaning.

Life as an Engineering Problem to be Solved by AI?

The belief that life should be treated as an engineering problem, with an optimal solution and the promise of maximum control through technologies such as AI, recurs throughout the discourses popular in Silicon Valley.

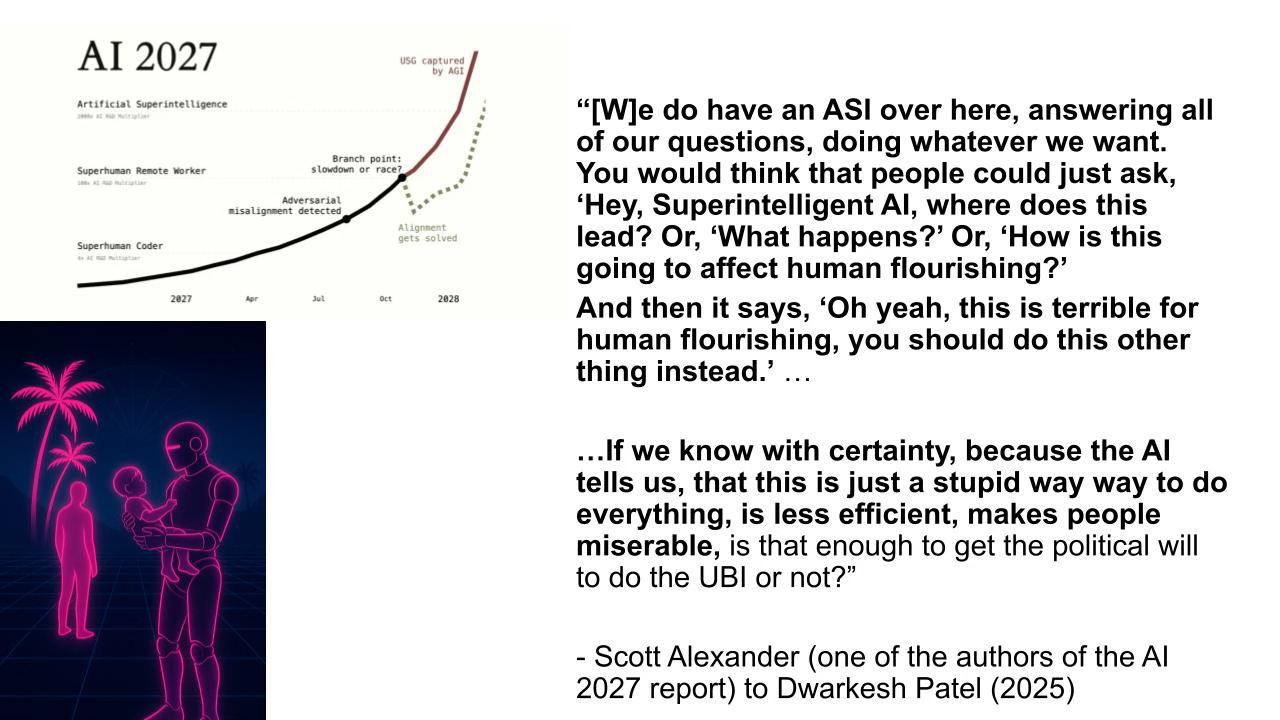

For example, we can see it in the following remarks from Scott Alexander, author of the rightly celebrated Astral Codex Ten newsletter, and a co-author of the much-discussed AI 2027 report, in an interview from April of this year.

(Note: in the following quotation, “ASI” is an abbreviation for artificial superintelligence. The immediate context of the comment is a discussion about current political arguments around the plausibility of universal basic income (UBI) as a response to economic upheavals anticipated to emerge from AI.)

What is striking to me is how Alexander blithely assumes that the anticipated ASI will actually be able to answer “all of our questions” about “human flourishing” and do so “with certainty.”

Here is the link to the point in the interview where the relevant moment comes up. The graphic of a neon Robocop holding a baby just above is an image I co-generated with ChatGPT. I made it in order to visualize the tensions around our technological temptations to offload our caring to machines and algorithms. I discuss this further in my talk “Who Cares About Values?” (from which this slide is taken).

Again, my point in sharing this moment from the conversation with Scott Alexander here is to show how it partakes of and contributes to the cultural context in which it is just taken for granted that life and human flourishing can be treated as an engineering problem with an optimal solution. This is exactly the cultural context that feeds into the sense of nihilism described by Scanlon and Syfret and anticipated by Heidegger.

In these conditions of technological self-assertion, the receptive abilities and dispositions for articulating and taking care of what matters are endangered.

Moreover, Alexander’s eagerness to hand over the messy questions of human flourishing and life in common to an AI system brings us directly back to the main point at issue in the prequel post to this one: concerns about algorithmic dependence and the erosion of human autonomy that Brendan McCord, Johnathan Bi, and the Cosmos Institute articulate as the defining peril of our technological age.

Care Beyond Optimization and Autonomy

But again, autonomy is only part of the picture. People can’t properly tend to what matters while trying to optimize and control it (this phrase comes from my collaboration with David Spivak of Topos Institute).

Raising a child, sustaining a loving relationship, articulating new social concerns that demand your attention, healing conflict in your cherished friendships, cultivating community with your neighbors, and identifying the traditions worthy of your dedication, for example, are not problems with definite optimal solutions, but ongoing projects to inhabit and navigate in uncertainty, conversation, and commitment.

Living a life of care demands a different orientation. It demands a revitalization of our capacity to give a damn: the receptivity to be claimed by concerns that call out as important, and the courage to sustain commitments despite uncertainty. The skills for taking care together, in conversation, as part of concrete communities dedicated to what matters. Iconoclastic questioning of optimization itself as the measure of life, and other taken-for-granted elements of today’s common sense. And radical hope: that non-despairing openness to futures we cannot yet clearly imagine.

These are the topics for next time…

Coda: A Companion Conversation on Nihilism

As a companion to this whole conversation, I’d like to recommend this relevant interview between Johnathan Bi of Cosmos and the University of Chicago philosopher Robert Pippin, one of my favorite living philosophers. Drawing from Nietzsche rather than Heidegger, Pippin strikingly explains a related perspective about how nihilism is a historical phenomenon (rather than a matter of individual attitudes) arising in connection with developments in Western culture (such as modern technology and consumer capitalism).

Dear B. A question I have been pondering: What is the causal or phenomenal relationship between cynicism and nihilism? You have talked about young people celebrating nihilism. I am also watching many beginning to be proud of their cynicism. I would love to hear your reactions and, if it is worthy of your attention, perhaps even a blog piece. With gratitude.

I think the "problem" is that many young people are beginning to take life as a problem to be solved. The joy and play in our engagements are disappearing, and people are not even asking what for. Humanity is headed for rapids, as you mentioned elsewhere in your posts. My goodness, my friend, what a timely and fantastic project you have taken on. As an entrepreneur and trusted guide to many entrepreneurs, I am loving this writing and pondering what the domain of my career is in the era of AI.