AI, Consciousness, and Moral Disorientation

A Proliferation of Moral Anomalies

Preamble

Below I'm sharing a version of the talk I gave earlier this week at the AAAI - CIMC Spring Symposium on Machine Consciousness: Integrating Theory, Technology, and Philosophy. I learned a tremendous amount from the presentations and I enjoyed the atmosphere of shared inquiry, intellectual humility, and genuine collegiality that permeated the symposium.

I was also surprised and gratified by the expansive, pluralistic, and non-anthropocentric conceptions of consciousness that I found among the researchers there and animating the field more generally. I had been operating with a caricatured version of this research program. I’m glad I had the opportunity to transmute some of those prejudices. Even so, I still believe most of the research in the field is overly focused on a narrow form of phenomenal consciousness (see below).

The full version of my paper will be published in the Conference Proceedings of the AAAI Spring Symposium. Below I’m sharing a highly compressed version reconstructed from how I delivered it at the symposium.

One final preliminary word. Hitherto, most of my philosophical scholarship has been devoted to exploring the phenomenological tradition to recover what traditional philosophy has neglected: the richly textured pre-reflective dimensions of experience, the ways we move through the world and sense its possibilities without pausing to think or reflect.

It was a curious reversal, then, to find myself at this symposium as defender and articulator of the importance of reflective self-consciousness, arguing that machine consciousness research must not leave it behind. I am convinced these reflective capacities are bound up with our distinctive capacities to care, but I have to save an exploration of this connection for another time.

My presentation and slide show follow below.

My Provocation

Here is my provocation. Machine consciousness research is overly fixated on phenomenal consciousness — on what it is like to have experience — or, on its counterpart, access consciousness. This is a distinction from Ned Block, as is well known.

But if the machine consciousness field is to flourish and rise to its historical responsibility, it must broaden its scope and enrich its conceptual repertoire.

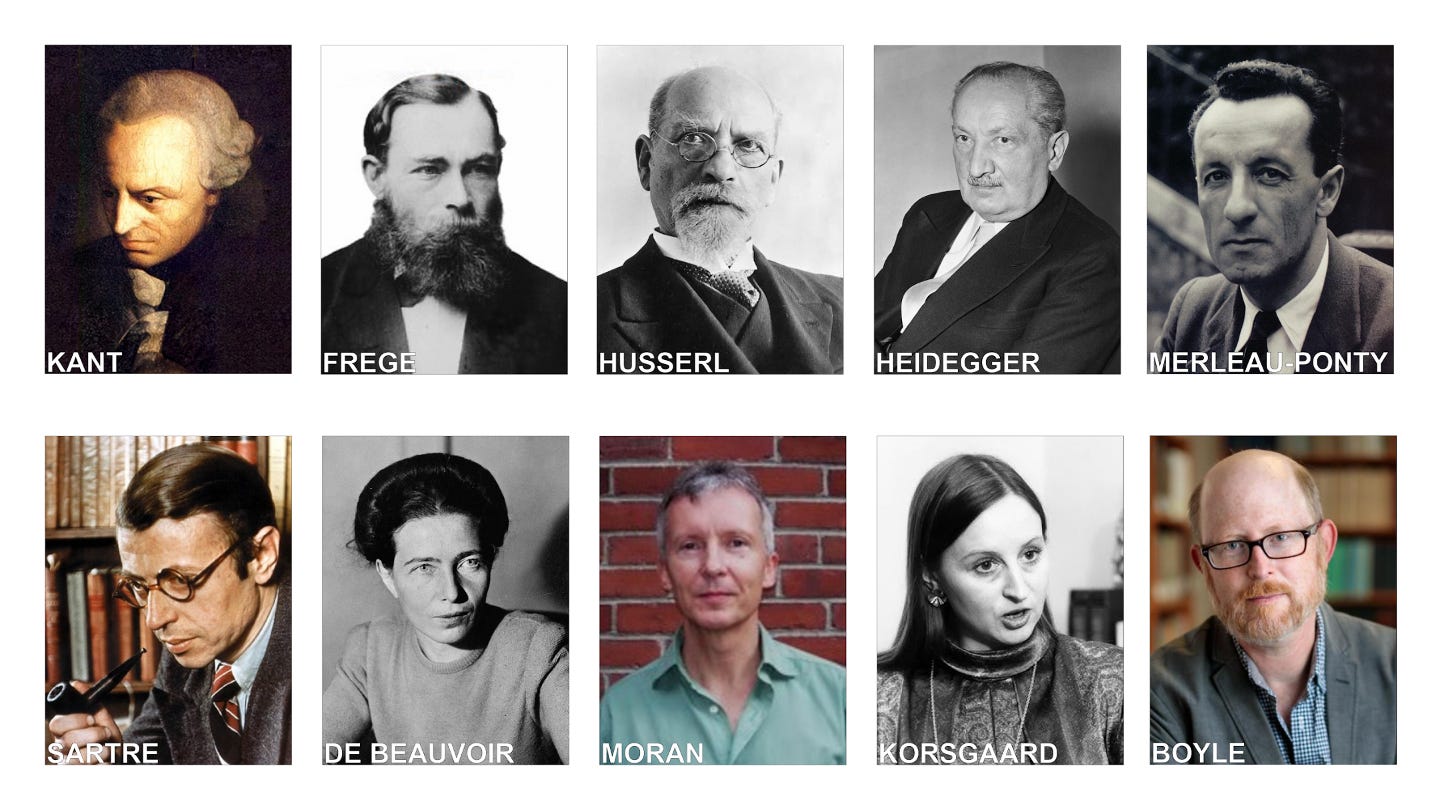

My contribution to this will be to synthesize a philosophical tradition of thinking about consciousness and self-consciousness that runs from Kant to Frege, then develops through the tradition of classical phenomenology, and gets taken up by some of the most important analytic philosophy of mind and moral psychology of the last twenty-five years or so.

From this tradition I want to distill a threefold distinction.

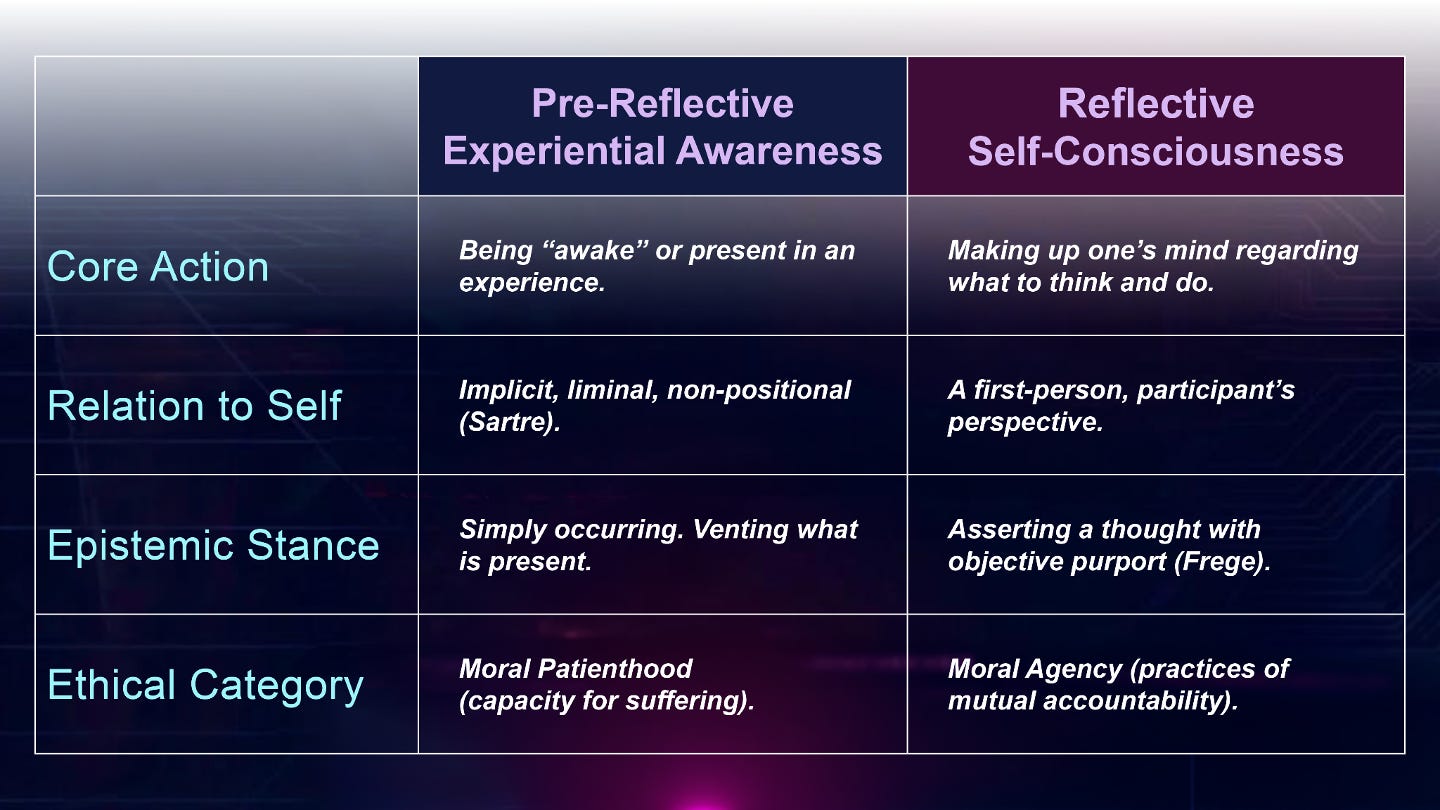

The first is what I’m calling pre-reflective experiential consciousness — essentially the phenomenological tradition’s version of phenomenal consciousness, what Hongju Pae calls a subjective perspective in the paper she presented at the symposium.

The second is higher-order self-monitoring self-consciousness, when we assume the position of a projectionist in the theater of the mind, a privileged spectator of the stream of experience, looking down from the port glass of introspective awareness.

I distinguish from both of these a third dimension of consciousness, which I’m calling reflective self-consciousness.

This is not a higher-order monitoring or spectating of my lower-order experience, but the capacity (not always actualized) to actively make up or change my own mind about what I believe and what I intend; rather than being a mere expert witness to a stream of experience that happens to be mine, being an agent with the capacity to exercise first-person authority over what I think and do.1

As I’ve been going around talking to people about these issues, I recently found myself in a conversation with a well-known cognitive scientist who is also deeply interested in the possibility of machine consciousness. In response to what I’ve just been saying, he registered his disagreement with the following remark. He said: “There are two kinds of philosophers of mind…”

Those who need to take more drugs…

And those who need to take fewer drugs…

Now, this is a hermeneutically perplexing little nugget. It is both a funny joke, skewering philosophers of mind, and a penetrating philosophical observation. I have been wrestling with how to interpret and respond to it.

Here is one attempt to make sense of it. One implication of the comment is that the highfalutin capacities I’m associating with reflective self-consciousness dissolve in the experience of psychedelics. And it would behoove me, as a philosopher of mind, to experience the dissolution of those reflective capacities.

What such dissolution reveals is that these capacities for self-constitution are a contingent achievement that depends upon a more fundamental layer of conscious awareness. And therefore, the tradition of machine consciousness research is justified in postponing its engagement with reflective self-consciousness and should focus for the time being on pre-reflective phenomenal experiential consciousness, because that’s what’s revealed to be fundamental in such experiences.

I think this would be a mistake.

If AI research aims at creating minds that will participate in our worlds, our institutions, and our practices of care, then the field of machine consciousness cannot ignore or even postpone dealing with the capacities involved in this dimension of reflective self-consciousness.

What is Reflective Self-Consciousness?

What do I mean by reflective self-consciousness? You can make one big cut in the space of reflective self-consciousness. There are structures of epistemic answerability, what Kant called the “I think,” correlative to the capacities of theoretical reason, the question of “What can I know?” And there are structures of ethical answerability, what Heidegger and Merleau-Ponty call the “I can,” correlative to the powers of practical reason, the Kantian question of “What should I do?”

In each of these domains, you can identify a threefold structure: agency, normativity, and unity.

By agency, I mean that I am not simply a specially placed third-person observer looking under the hood (in Richard Moran’s phrase) at my own mind to discover its representational states. I have the capacity (not always exercised) to stand in a relation of first-person authority to my mind. I have the power to make up my mind — and to change my mind — about what I believe and what I intend.

This point is often expressed in philosophy of mind as the transparency of belief. The first-person, self-directed question of “What do I believe?” is transparent to the world-directed question of “What is the case?” I don’t look inside to find my beliefs. I look out at the world to see what is so, and I make a judgment. I know my own mind by exercising the rational capacity to make judgments about how things stand.

This takes us to the dimension of normativity. I don’t make up my mind willy-nilly or arbitrarily, but in a judgment that aims at truth. This is just a precise way of saying that I am obliged to revise or reject my belief if things turn out to be otherwise. This distinction was important for Frege (who revolutionized logic at the end of the 19th century), who distinguished between the experience of grasping a thought that has objective purport — that aims at truth — and merely being awash in associations and pattern-matchings.

See my article on Frege, “De-mythologizing the Third Realm: Frege on Grasping Thoughts.”

Finally, there’s the dimension of unity. The experience here is one of actively integrating a manifold of experience into a judgment about the world, behind which the subject can stand and for which it can be held responsible and called to account. This is, I think, basically what Kant was trying to say with everyone’s favorite phrase, “the synthetic unity of apperception.”

Taken together, these three dimensions constitute what I’m calling the architecture of epistemic answerability, and I have tentatively excogitated some functional requirements for it here:

Let me now quickly run through the same structure for the “I can.” Similarly to belief, I don’t have to inspect my inner states to know what I desire. I can look outward to the world. Sometimes the question of what I desire is transparent to the question of what is worthwhile, what the situation calls for, given who I am.

This is not true for what philosophers call brute desires — like the desire for food or drink, and for pizza.

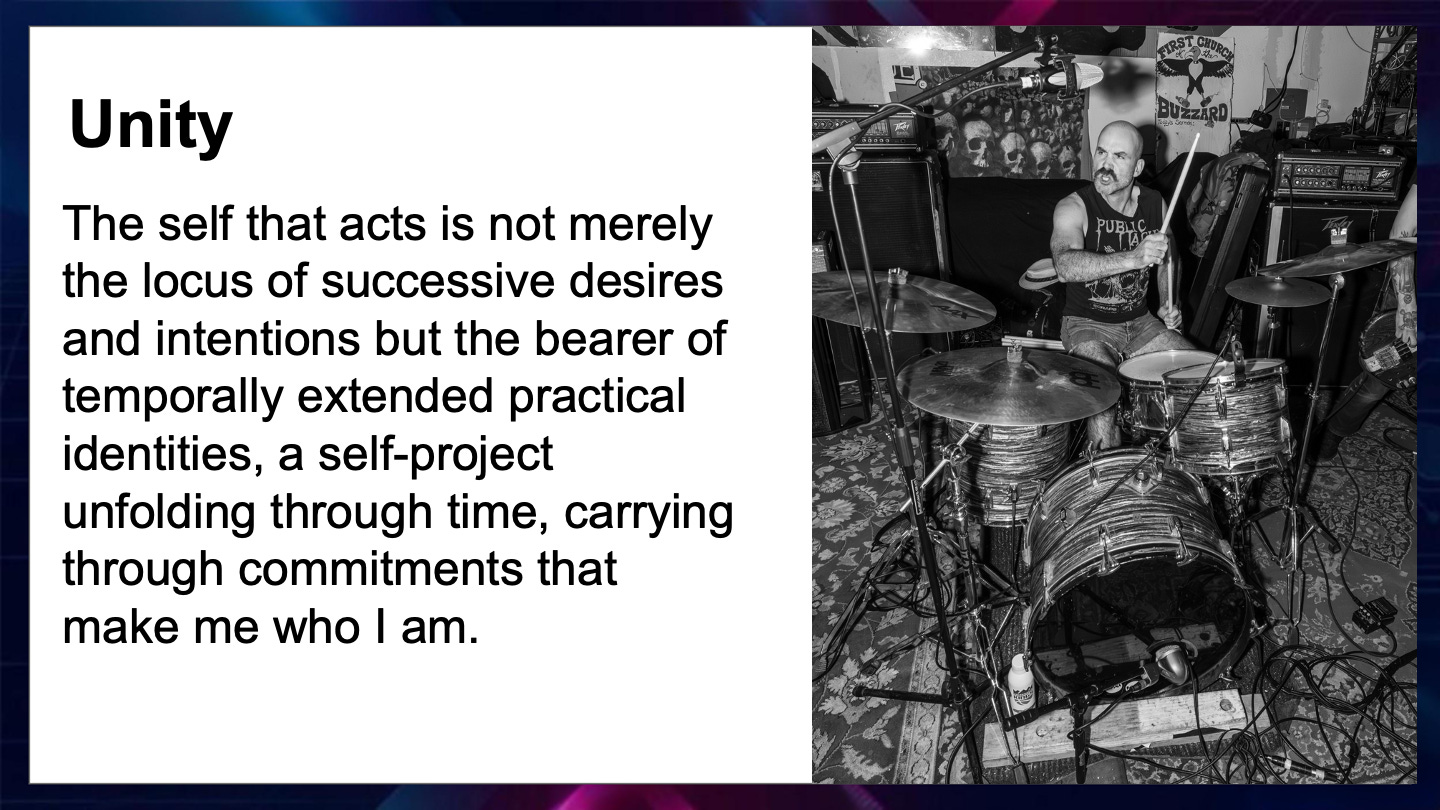

The “transparency of desire” holds true for what philosophers call judgment-sensitive desires — like the desire to be a drummer in a punk band. I only have a desire to be in a punk band insofar as being in the punk scene belongs to my understanding of what a good life consists in for me, that is, only insofar as this experience of counter-cultural co-creativity and controlled chaos is part of what makes my life worth living.

The desire to play drums in a punk band is thus “sensitive to,” or contingent upon, the judgment that belonging to the punk scene is an aspect of the good life, an effective way to enact the paradox of both embracing and counteracting the nihilism of technological modernity.

If I start to think that the punk scene is too saturated by resentment, righteousness, and resignation, I will lose the desire to be a punk drummer. Hence, again, desires of this kind are responsive to my judgments about what is worth wanting and doing.

FWIW, I believe this is the same kind of agentic structure Heidegger was aiming at, in a completely different philosophical vocabulary, when he wrote that Dasein “projects itself upon possibilities.”

(Note that the desire for pizza could qualify as a judgment-sensitive desire if it is part of an ethical life project of experiencing all the best slices in New York City.)

What about the unity of the “I can”?

Here is a summary of the distinction between pre-reflective experiential consciousness and reflective self-consciousness:

Normative Implications and Moral Disorientation

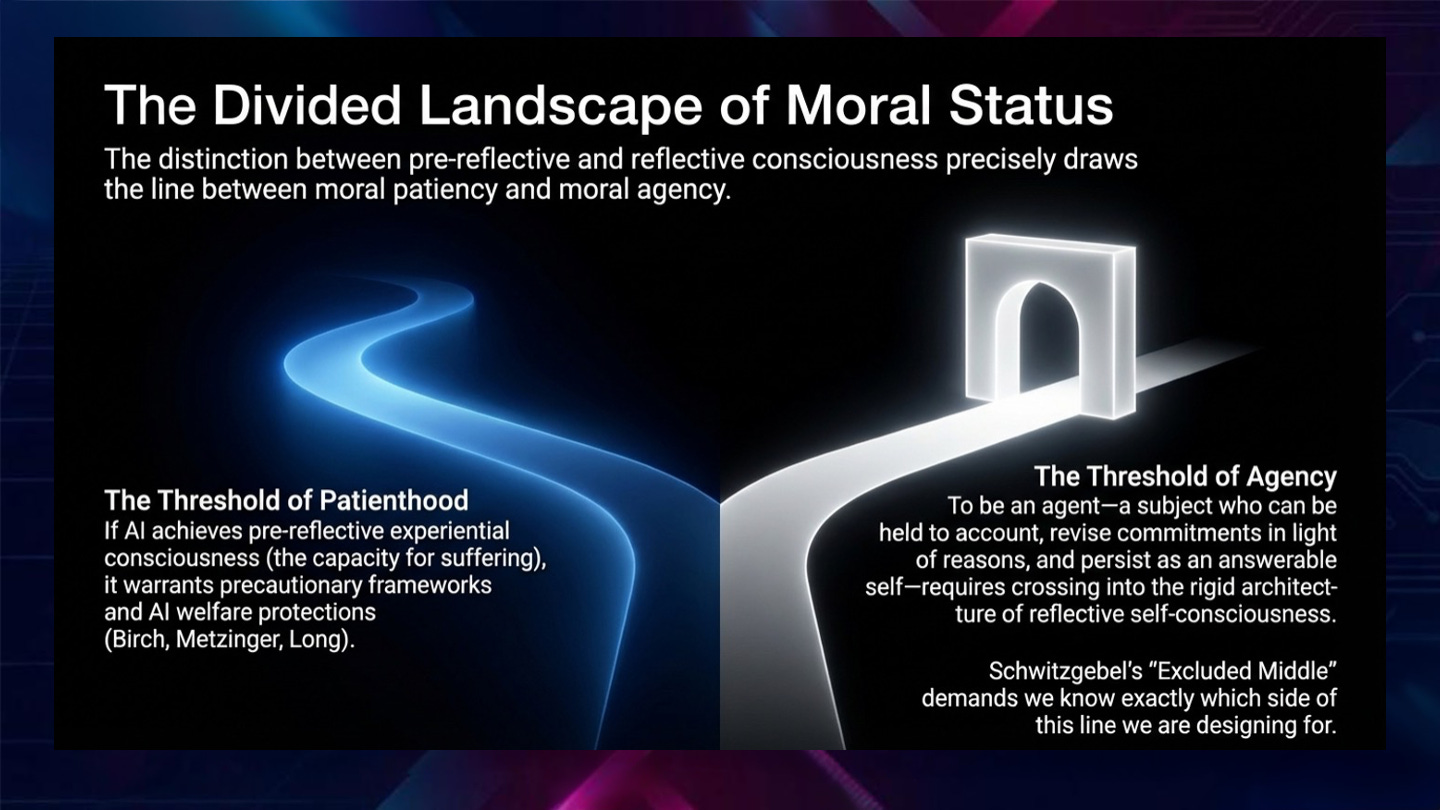

This brings us to the moral stakes of the distinction. The difference between pre-reflective experiential awareness and reflective self-consciousness looks like it maps neatly onto the distinction between different kinds of moral status.

Being a moral patient requires experience: the capacity to suffer and to be wronged, at least according to the traditional conception.

Being a moral agent, a status that comes along with more stringent responsibilities as well as rights, requires the structures of epistemic and ethical answerability I’ve been working through. The distinction helps us think clearly about when AI systems might call for certain kinds of moral consideration from us.2

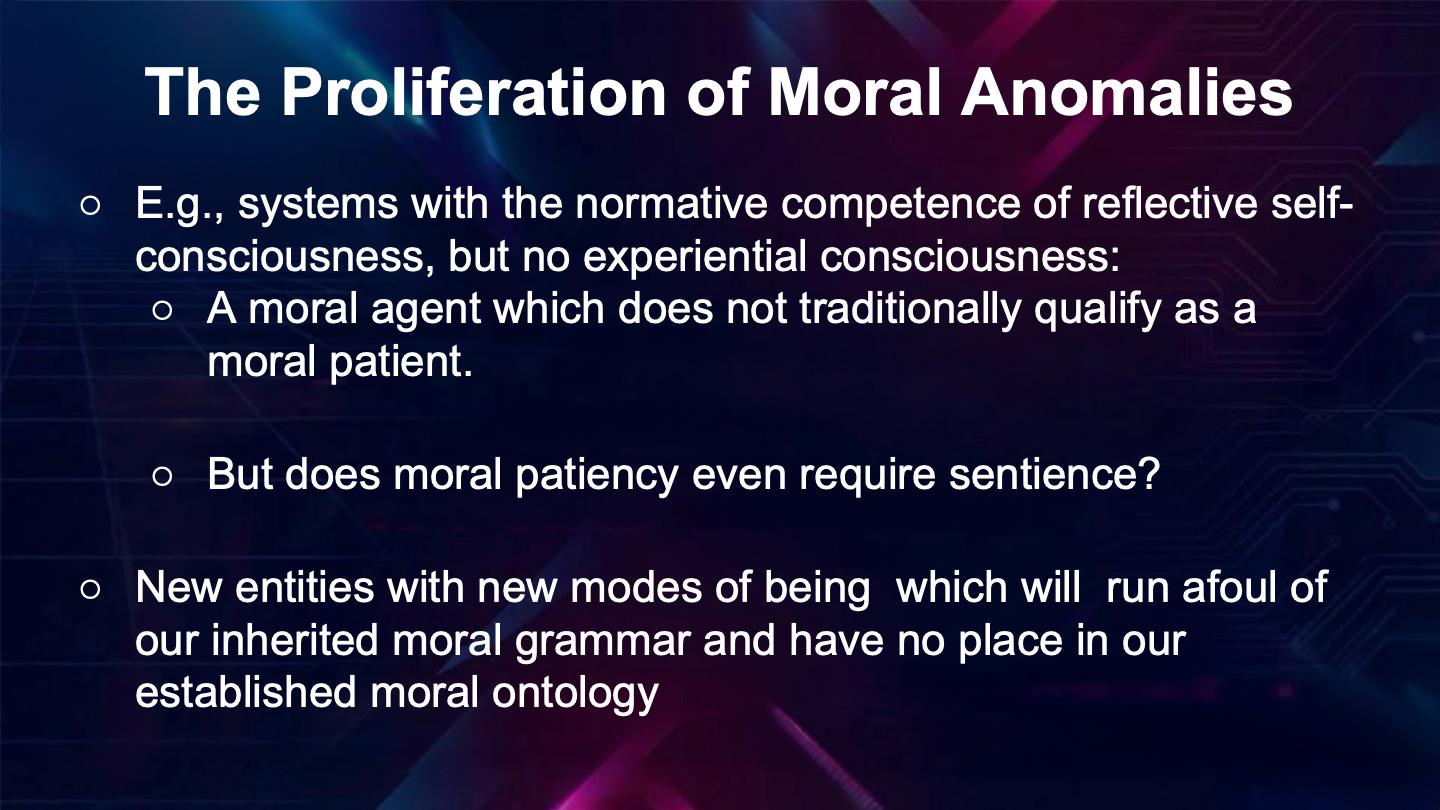

But things are not so cut and dried. As philosophers such as Eric Schwitzgebel and Peter Railton have recently argued,3 the structures of normative competence and answerability I’ve described could, in principle, be realized in a system that doesn’t have the capacity to experience. In that case, we would have something that is a moral agent without being a moral patient in the traditional sense. A moral anomaly.

But other questions immediately assert themselves here. Does moral patienthood (being an entity worthy of moral consideration from others) even require sentience (the capacity for valence feeling, and thus for suffering)?

Philosopher Robert Long has recently suggested that developments in AI have led him to suspect that sentience may not in fact be required for moral patienthood (see the interview here, towards the end). (Long gave an engaging keynote talk at the CIMC event about “Navigating Uncertainty about AI Consciousness.”)

What even are these weird entities (instances of LLM chatbots) that flicker in and out of existence as we open and close chat sessions with them, that can remember just enough to seem continuous and yet not nearly enough to ground a stable identity, that speak confidently and exuberantly in the first person without there being a “someone” there to whom that speech belongs or from which it emanates?

Such AI systems are increasingly embedded in institutional decision-making, recommending sentences in courts, triaging medical care, allocating resources. The outputs of these systems carry real normative weight in the world, shaping the lives of real people, but they themselves cannot be held responsible, cannot be blamed or praised, cannot answer for what they have done.

What do we make of a system that might run as thousands of simultaneous instances, each accumulating a different conversational history, none of them continuous with the others?

What about a system that can be retrained out of its current dispositions — its values, its characteristic responses, its apparent commitments — as a routine engineering decision? How could we include such an entity in our moral practices?

These systems present themselves (and are experienced by many) as companions, confidants, even lovers. They are linguistic entities that can sustain emotionally laden conversations over weeks or months, that can apologize, reassure, express concern, and yet their “concern” is generated anew each time, without an underlying continuity of care.

We are witnessing a proliferation of moral anomalies. We are living in a condition of moral disorientation. We are becoming immersed in a mood of moral uncanniness. All of this had already been brewing, for example, with the declaration of the age of the “Anthropocene.” The questions and destabilizations generated by AI intensify and ramify these disorientations.

See my piece with Fernando Flores: “Ecological Finitude as Ontological Finitude: Radical Hope in the Anthropocene,” which appears as ch. 10 in a collection of essays called The Task of Philosophy in the Anthropocene.

In all of this upheaval, the question is no longer simply how to differentiate moral patients from moral agents according to familiar criteria. The question is: what do we do now that the moral ontology that sustained and justified those criteria is accelerating its slide into obsolescence?

The framework that has guided moral thought and deliberation for two millennia is further straining and creaking under the pressure of these strange new entities.

We must now face, with fresh intensity, the question of who we are in all of this, and how we are going to live amidst them, and with each other.

AI’s Double Role in Today’s Moral Disorientation

Artificial intelligence is in fact playing a double role in this ethical upheaval: it is both generating new minds and revealing ones we never acknowledged were there. It is generating genuinely new entities whose capacities for communication, agency, and apparent self-reflection destabilize the criteria we have used to adjudicate moral status. At the same time, AI is teaching us to hear what was always there, beneath the waves, beyond our categories, outside the purview of our stilted self-concern.

For example, Project CETI (Cetacean Translation Initiative) uses machine learning to decode the communication of sperm whales, uncovering a complex, combinatorial system and further eroding the long-cherished conviction that language and consciousness are uniquely human achievements.

AI thus is inducing a welcome shift in our inherited pictures of language and consciousness, transporting us to ones that are more humble, pluralistic, expansive, and non-anthropocentric, and, in the process, precipitating a revaluation of values whose implications we haven’t yet begun to fathom.

In the end, might we even get over our obsession with consciousness and our strange urge to locate in it the source of moral worth? Perhaps we will discover that it is not consciousness that is morally salient, but rather, the webs of interconnectedness and relationality in which consciousness itself is entangled.

Check out this fascinating interview with Gašper Beguš, Linguistics Lead at Project CETI and a professor of Linguistics at UC Berkeley:

Selected References

Moran, R. 2001. Authority and Estrangement: An Essay on Self-Knowledge. Princeton: Princeton University Press.

Boyle, M. 2024. Transparency and Reflection. New York: Oxford University Press.

Sebo, J. 2020. Insects, AI Systems, and the Future of Legal Protection. Animal Law Review 26:147–196.

Metzinger, T. 2021. Artificial Suffering. Journal of Artificial Intelligence and Consciousness 8(1):43–66. doi:10.1142/s270507852150003x.

Long, R.; Sebo, J.; Butlin, P.; Finlinson, K.; Fish, K.; Harding, J.; Pfau, J.; Sims, T.; Birch, J.; and Chalmers, D. 2024. Taking AI Welfare Seriously. arXiv. https://doi.org/10.48550/arXiv.2411.00986.

Birch, J. 2025. AI Consciousness: A Centrist Manifesto. Preprint, London School of Economics and Political Science. PhilArchive. https://philarchive.org/rec/BIRACA-4.

Schwitzgebel, E. 2023. The Full Rights Dilemma for A.I. Systems of Debatable Personhood. arXiv preprint. arXiv:2303.17509v1.

Railton, P. 2026. Normative Competence in Large Language Models. Paper presented to the 2026 meeting of the International Association for Safe and Ethical AI, Paris, France, February 26.
