Intelligence, Consciousness, and Care

A roundup of recent conversations worth listening to

Given all the progress, hope, hype, and worry connected with AI today, certain long-fraught philosophical issues have become matters of public concern and debate. Questions of the nature of intelligence and consciousness are living rent-free in everyone’s minds. I’ve been trying to get questions of care the same kind of subsidy.

In this post, I want to mark four moments in the contemporary conversations about intelligence, consciousness, and care that I found illuminating and inspiring, and then close with an update from my work on the Hubert Dreyfus Audio Archive.

I. Anil Seth and the Mythology of Conscious AI

I think everyone should read Anil Seth’s Berggruen Essay Prize–winning piece, “The Mythology of Conscious AI” (you can also listen to it at that link). Seth is one of the most serious and careful voices in the contemporary conversation about intelligence and consciousness, and someone whose work I consistently find worth listening to.

The essay distills themes Seth has been developing for years across talks, interviews, and most fully in his excellent and unusually readable book Being You: A New Science of Consciousness (2021). It opens with a distinction that is far too often blithely blurred: intelligence and consciousness are not the same thing. One can have impressive problem-solving capacities (if we want to take that, for a moment, as a definition of intelligence) without there being anything it is like to be such a problem-solving system.

What I find most useful in the essay, though, is Seth’s systematic dismantling of a background assumption that many people in tech scarcely recognize as an assumption at all: that the brain is nothing but a computer, crunching algorithms. In the philosophy of mind this view is called “computational functionalism.”

Computational functionalism holds that consciousness amounts to a certain kind of computation, such that implementing the right information-processing structure is sufficient for consciousness to arise.

I can't tell you how often I find myself in conversations with people in the AI world who take this view for granted. It often goes together with an unapologetically disembodied view of the mind, as if the mind were just a software program that can run on any sufficiently sophisticated hardware (or “substrate”), meaning eventually you will be able to upload your mind to some mainframe and get rid of your body altogether.

I find it strange that this view, born of some kind of belief in its scientific rigor, ends up endorsing a crude Cartesian dualism between mind and body, as if you could just carve off the mind or consciousness from the body and get on with things….

Seth is blunt about what hangs on this:

“The very idea of conscious AI rests on the assumption that consciousness is a matter of computation… And if it’s wrong, as I think it may be, then real artificial consciousness is fully off the table, at least for the kinds of AI we’re familiar with.”

Seth then offers four lines of argument that, taken together, put serious pressure on this assumption.

First, brains are not computers. This is not a romantic slogan, but a claim about explanatory mismatch. Brains are living, metabolically active, dynamically self-organizing systems embedded in bodies. Digital computers manipulate symbols in ways that abstract away from precisely those features. Treating brains as if they were basically wet laptops obscures more than it explains.

Second, there are serious alternative theories of consciousness that do not identify it with computation at all. Predictive processing, embodied and enactive approaches, and biological theories of consciousness all point in different directions. Computational functionalism is not the neutral default it is often taken to be.

Third, life matters. Consciousness, as we actually know it, shows up only in embodied living (and, I would add, dying) systems. That does not prove that non-living systems could not be conscious, but it makes the burden of proof much heavier than most AI hype acknowledges.

There’s a recent paper by philosopher Ned Block on this issue: “Can Only Meat Machines Be Conscious?” He talks about the paper on a recent episode of Sean Carroll’s Mindscape podcast.

Fourth, simulation is not instantiation. A simulation of a hurricane does not get anything wet. A simulation of digestion does not metabolize nutrients. Likewise, a system that simulates the (supposed) causal structure of conscious processes does not thereby become conscious (it could only do so if consciousness is in fact nothing but substrate-independent computation; but we can’t just assume this). This point has been made before, but Seth presses it with welcome clarity.

The essay is very much worth your time. Here is a link to a recent talk of Seth’s on YouTube that covers much of the same ground, for those who prefer to hear these arguments worked through live.

II. Alison Gopnik on Care-Giving and Intelligence

Last week I had the good fortune to hear Alison Gopnik speak at UC Berkeley’s Institute of Personality and Social Research. The talk, “Large AI Models as a Cultural and Social Technology,” reprises and extends themes she has been developing for some time, including in other publicly available lectures.

Gopnik’s central move is to shift our attention away from narrow benchmarks of task performance and toward what she calls the “intelligence of care.” Human intelligence, on this picture, is not exhausted by optimization, prediction, or problem-solving. It is deeply entangled with practices of caregiving, teaching, exploration, and scaffolding the development of others. Parents, after all, are not trying to maximize their own reward functions. They are oriented toward futures they themselves will not inhabit.

This emphasis resonates strongly with my own ongoing work on care as an overlooked dimension in AI research, including the talk I posted last week. When I raised the connection between her account of caregiving and the existential-phenomenological notion of care that figures so centrally in early critiques of AI, Gopnik was cautious. She resisted identifying the two too closely, though she readily acknowledged that caregiving is bound up with meaning and with caring more broadly.

What I am still thinking through is whether the tension she sees there really holds. She emphasized that parents routinely subordinate their own immediate sense of meaning to the future of their children, implying that care-giving is therefore a different phenomenon from existential care. But, as I see it, the future of a parent’s child is not external to their own care. It becomes their care. The child’s possibilities are taken up as personally binding and compelling; the parents take the child’s ends as their own. I do not see this as competing with caring “in general,” but as one of its most revealing expressions.

In any case, I was grateful for the exchange and for finally being able to see Professor Gopnik in a live talk after watching so many of her videos on YouTube. Here is a recent video of pretty much the same talk that Professor Gopnik gave in Berkeley last week:

III. Kate Withy on Being-in-the-World and Vulnerability

The third moment of conversation I want to share this week comes from outside the AI conversation proper, but speaks directly to its background assumptions. Katherine Withy, a Heidegger scholar at Georgetown University, recently published an essay on Heidegger and vulnerability that offers one of the clearest non-technical articulations of Heidegger’s basic picture of the human condition I have seen in some time. It is called “Heidegger Knew That We Are Always On the Outside, Weathering the Storms” (you can also listen to the essay at that link).

Kate manages to compellingly convey, without laborious “Heideggerese,” why the fantasy of an insulated inner self or mind separate from the world “out there” is not just false but impoverishing.

We are not minds sealed inside containers, occasionally peering out at the world. We are constitutively exposed, entangled, dependent. Our projects give things meaning, but that same meaningfulness makes us vulnerable. Equipment can break. People can fail us. Projects can fail in any number of ways. This vulnerability is not an accident to be engineered away. It is the condition under which anything can matter at all.

The temptation to imagine ourselves as sovereign, invulnerable interiors is understandable. It promises safety. But it also flattens life. A being that cannot be touched by the world has nothing to care about. Withy’s essay makes vivid what is lost when we deny our finitude and dependence: joy, love, and the possibility of being moved.

For anyone thinking about intelligence, artificial or otherwise, this is a critical corrective. Intelligence divorced from vulnerability and world-entanglement is already a distortion. Treating consciousness as something that could simply be uploaded or instantiated in silicon intensifies that distortion.

IV. Eric Kaplan and Tao Ruspoli on a Metaphysical Walk Through Bombay Beach

Another moment I want to share this week comes from a short video Tao Ruspoli filmed during the recent meeting of the American Society for Existential Phenomenology: a morning walk through Bombay Beach with Eric Kaplan. It is a conversation whose rhythms and shifting focus drive right into the crossroads of intelligence, consciousness, and care: how do you protect your mind, your attention, and your sense of reality from beliefs and frames that creep in through social pressure, prestige, and the constant stream of “information,” and that slowly deform what you can notice, what you can trust, and what you can care about?

Kaplan starts from the kind of calm, pragmatic provocation he is so good at bringing forth: he is less interested in believing what is “true” in some abstract sense than in believing in ways that let him flourish. He worries about ideas that put him in a weak, collapsed position where he has to learn about fundamental concerns from the internet, from books, from the ambient consensus, or from some other authority (like a philosopher) rather than letting being teach him directly, through contact with trees, dirt, weather, and the particularity of people and place.

Tao and Eric attempt to name what it takes to remain open to meaning in a world that often seems to withhold it. Kaplan keeps insisting that meaning is in fact abundant, whether or not you are in bland Encino or brash Bombay Beach. He says that “letters addressed to you” are everywhere, if you have the eyes to see them. He says that the trap begins when you start worrying that you and the world are not special enough as you already are.

Tao points out, however, that there are places and institutional arrangements that flatten difference and discourage the kinds of conversation and attunement that make a life feel real, and that there are environments that cultivate a healthier soul. Since their talk unfolds over the course of a walk, I recommend listening to it as you walk too. Then watch it again at home so you can take in the absurd beauty of Bombay Beach as it passes in the background of their video.

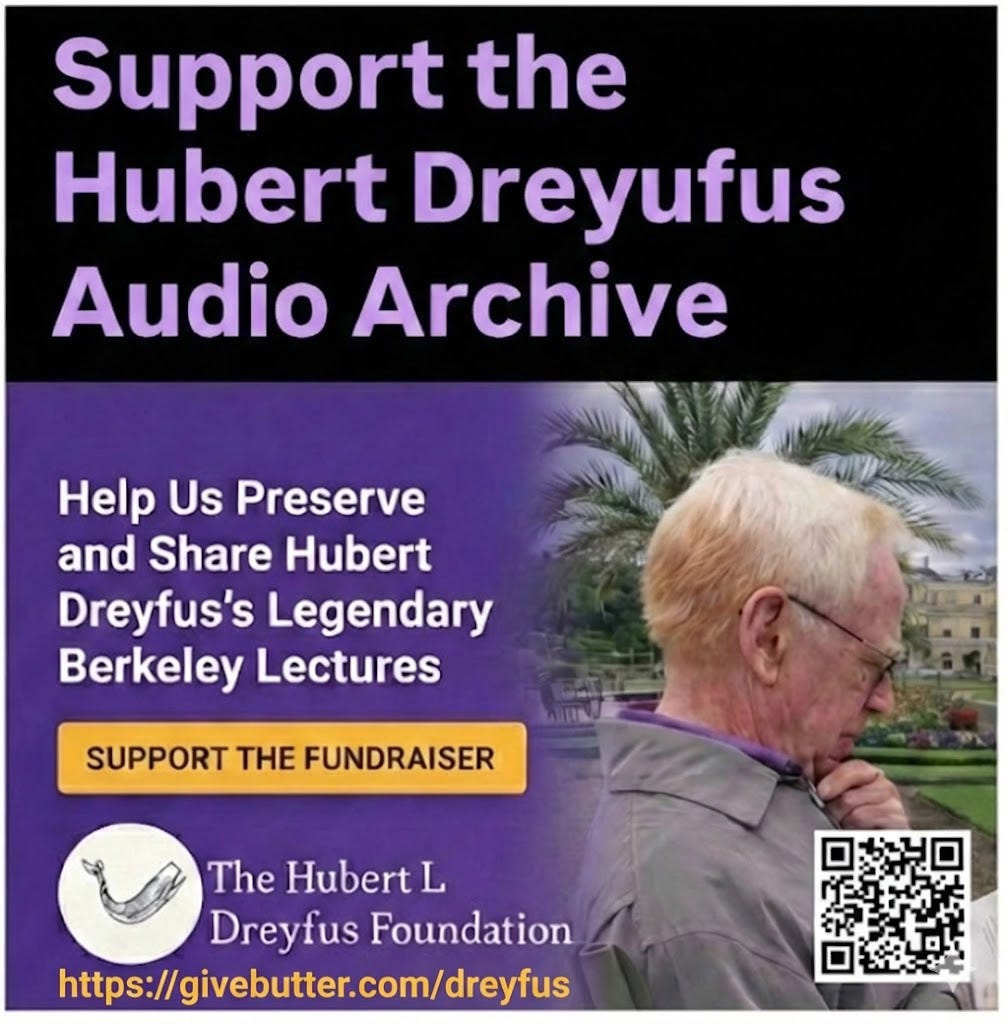

V. An Update from the Hubert Dreyfus Audio Archive

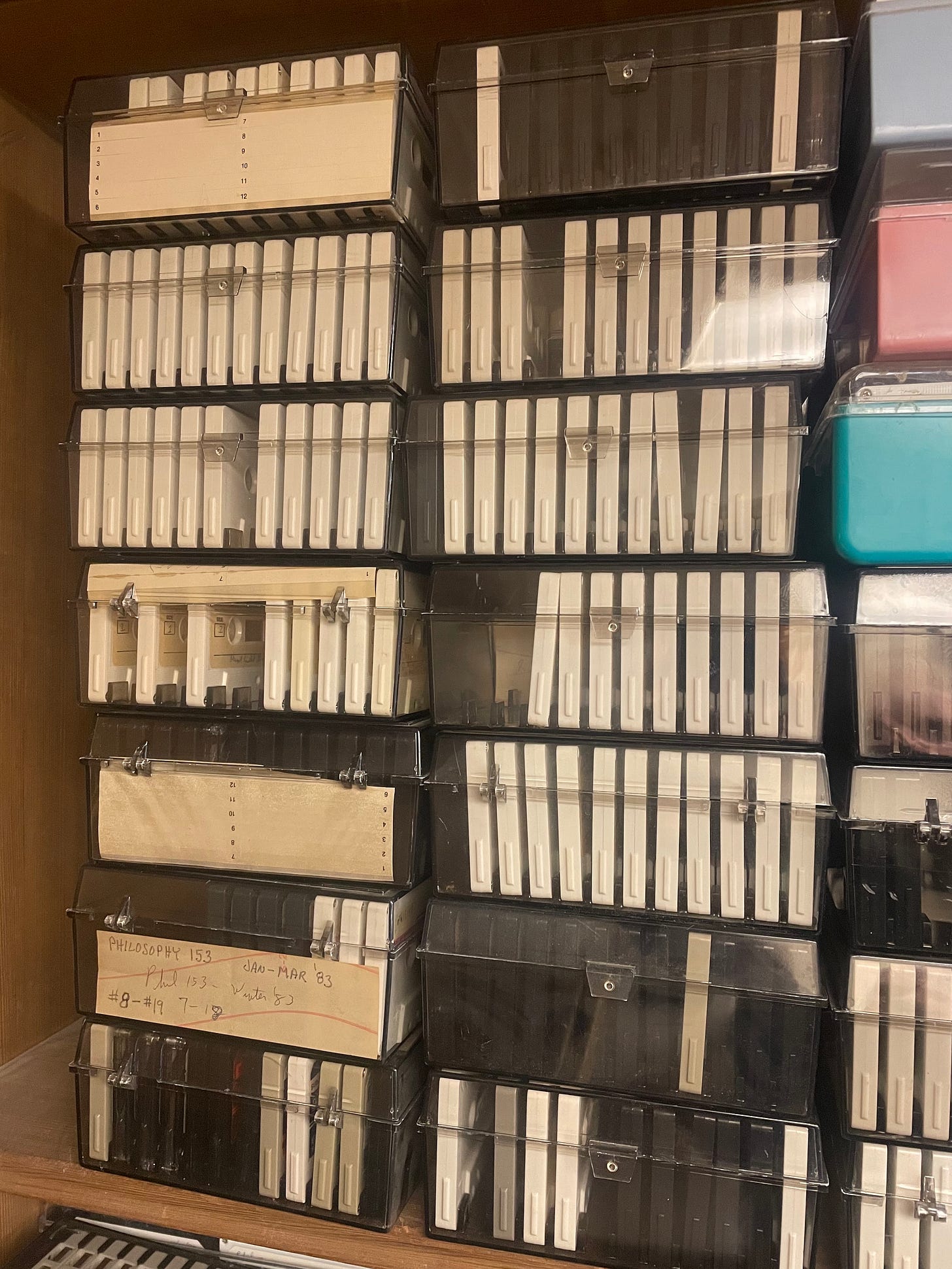

Finally, a concrete update from my own archival work. When I recently stopped by Berkeley’s Philosophy Department to ask permission to post a flyer for the Hubert Dreyfus Audio Archive fundraiser, Bill, the department manager, mentioned that he had been clearing out a long-neglected storage cave in the philosophy library.

In the process, he discovered a substantial cache of Hubert Dreyfus’s old cassette recordings, left behind years ago when they were placed on reserve for students. We are not talking about a handful of tapes. There are easily another 300 recordings sitting in the library (probably more). Now, we are looking at a total of at least 500 or 600 cassettes in the archive (we are cataloguing them now).

For me, this was really exciting news. It means that Dreyfus’s recorded lectures and seminars are far more extensive than I had realized. It also underscores the urgency of preserving these recordings properly. These tapes document a way of thinking about intelligence, skill, and care that remains deeply relevant, especially now.

Here is the flyer. Please help me spread the word about this project!

That’s all for now. I’ll be on a digital detox for the next two weeks, not looking at any devices or answering any messages. I will be back online February 9.

I have pre-scheduled some Without Why posts to drop while I’m disconnected, so be on the lookout for them. Thank you!

Please share this link with someone in your world who might appreciate it.

It's becoming clear that with all the brain and consciousness theories out there, the proof will be in the pudding. By this I mean: can any particular theory be used to create a human-adult-level conscious machine? My bet is on the late Gerald Edelman's Extended Theory of Neuronal Group Selection. The lead group in robotics based on this theory is the Neurorobotics Lab at UC Irvine. Dr. Edelman distinguished between primary consciousness, which came first in evolution and which humans share with other conscious animals, and higher-order consciousness, which came only to humans with the acquisition of language. A machine with only primary consciousness will probably have to come first.

What I find special about the TNGS is the Darwin series of automata created at the Neurosciences Institute by Dr. Edelman and his colleagues in the 1990s and 2000s. These machines perform in the real world, not in a restricted simulated world, and display convincing physical behavior indicative of higher psychological functions necessary for consciousness, such as perceptual categorization, memory, and learning. They are based on realistic models of the parts of the biological brain that the theory claims subserve these functions. The extended TNGS allows for the emergence of consciousness based only on further evolutionary development of the brain areas responsible for these functions, in a parsimonious way. No other research I've encountered is anywhere near as convincing.

I post because on almost every video and article about the brain and consciousness that I encounter, the attitude seems to be that we still know next to nothing about how the brain and consciousness work; that there's lots of data but no unifying theory. I believe the extended TNGS is that theory. My motivation is to keep that theory in front of the public. And obviously, I consider it the route to a truly conscious machine, primary and higher-order.

My advice to people who want to create a conscious machine is to seriously ground themselves in the extended TNGS and the Darwin automata first, and proceed from there, by applying to Jeff Krichmar's lab at UC Irvine, possibly. Dr. Edelman's roadmap to a conscious machine is at https://arxiv.org/abs/2105.10461, and here is a video of Jeff Krichmar talking about some of the Darwin automata, https://www.youtube.com/watch?v=J7Uh9phc1Ow