Frictionless Spinning in the Void: Why Humans Are Not LLMs (Video)

AI is not "There" - A talk at the University of Kentucky


Earlier this year, I was going around on a small philosophy tour, giving the same talk in different settings. It’s a talk with a slightly unhinged title—“Frictionless Spinning in the Void: AI is not ‘There’ or Why Humans are not LLMs.” I created a video version of the talk where I synchronized the slideshow I prepared for the talk with an audio recording of it. I’m new to this kind of video production and had a lot of fun doing it, even though, since I’m a beginner, the video is a little rough around the edges in places.

The version linked below is from a philosophy conference at the University of Kentucky. It is pitched to a group of philosophers and other Heidegger scholars, but the concerns it raises reach far beyond any academic seminar room. They have to do with how we understand ourselves, how we build worlds, and what kind of human beings we are in the process of designing ourselves to be amidst these weird and wondrous new entities, the LLMs.

Please watch and let me know what you think! Leave a comment on my YouTube page for the video. And please subscribe to my YouTube page, where I’ll be uploading many more videos in the coming months.

I provide a textual overview of the talk below.

In the talk, I am reactualizing a lineage that formed me: the early philosophical critiques of AI developed by Hubert Dreyfus and Stuart Dreyfus, Terry Winograd and Fernando Flores, John Haugeland, and others. These thinkers were writing in the era of symbolic AI, or GOFAI, when “intelligence” meant symbol manipulation, rule-following, logical formalisms, and puzzle-solving.

But even though the technical details of AI have changed dramatically, with the neural networks, transformers, LLMs, and diffusion models of today, the deeper philosophical temptations have remained: the temptation to think of human intelligence as reducible to disembodied information processing, the temptation to forget the primacy of our embodied presence in the world, the temptation to imagine that thought and language float free of the moods, shared practices, and commitments that constitute our world and make us who we are.

In some ways, these temptations have intensified. The LLMs are astonishing. We are talking with computers who seem to understand us, and who do things for us! They feel like alien artifacts dropped into history from another timeline. They speak with fluency, creativity, and an eerie sense of responsiveness.

Many people conclude, some breathlessly, some anxiously, that we are on a highway to artificial general intelligence, maybe even superintelligence, that strange fantasy-world in which AI systems recursively design better AI systems until something wholly beyond us emerges and takes over the world, and our work, from us.

I don’t believe that. But I do believe the stakes of this moment are extraordinarily high. I’m struck by how often an apparently technical advance asks us to revisit some older human puzzle: What counts as understanding? What binds us to truth? How do commitments shape our worlds? How do we teach ourselves, and each other, to care?

What concerns me most is not whether LLMs will take over. What concerns me is how easily we begin to think of ourselves as though we were LLMs: substrate-independent information processors running a next-token prediction algorithm, disengaged observers, floating, formal pattern-recognizers disconnected from care, embodiment, mood, world, and responsibility. What concerns me is the subtle drift of self-understanding that takes place when a technology becomes ubiquitous and we mirror ourselves back to ourselves through its logic.

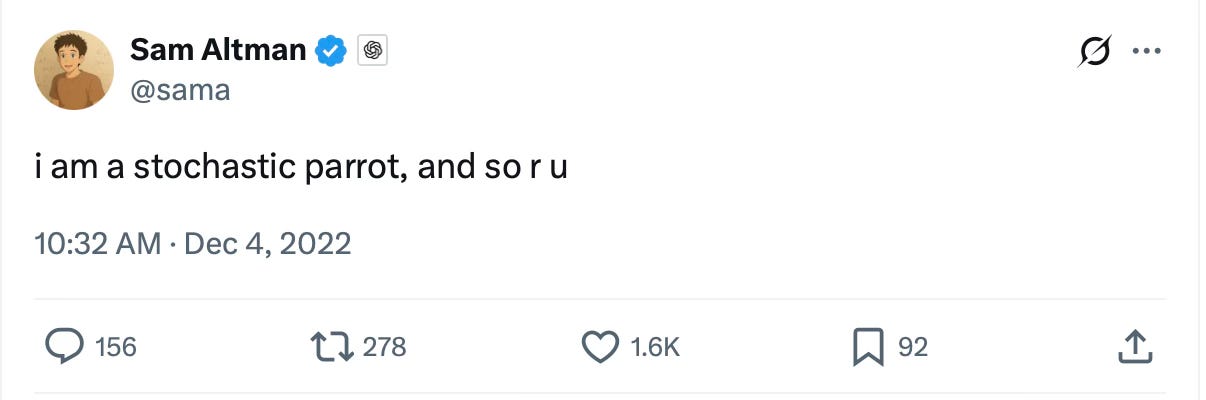

This comment from OpenAI’s Sam Altman says it all:

Altman is referring to a well-known expression coined by Emily Bender and her co-authors. They introduced the phrase “stochastic parrot” as a critique of language models that generate fluent language without understanding: systems that recombine patterns through statistical prediction, mimicking human expression without inhabiting the world that gives words their meaning. (Thanks to Blake Harper for reminding me of the relevance of this tweet from Altman to the argument I am making here.)

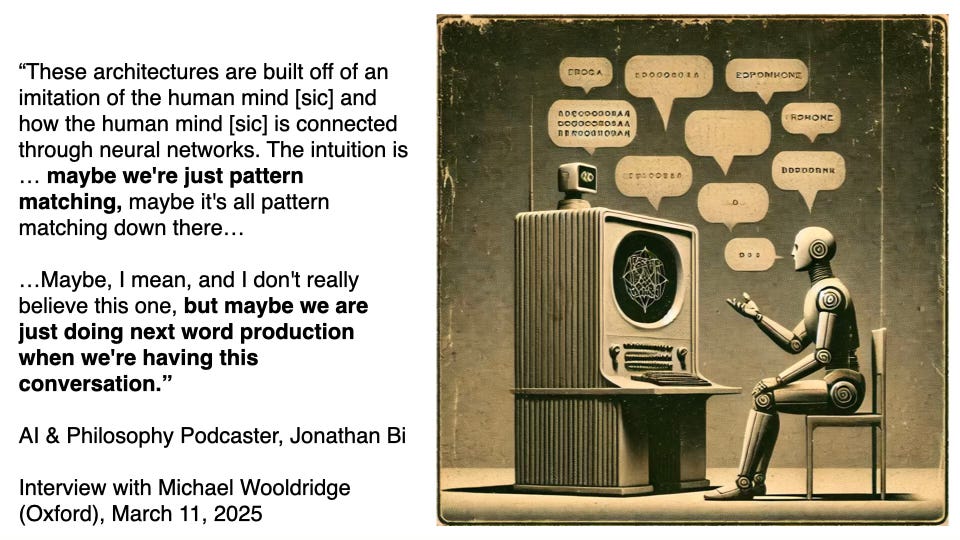

While it is not clear how deeply Altman’s tongue is lodged in his beak in this tweet, he’s clearly aiming to elevate our estimation of ChatGPT’s linguistic skills by suggesting we denigrate our own, as though human language were nothing more than disembodied next-token prediction. Philosophy podcaster Johnathan Bi also exemplifies the temptation:

We yield too much to these devices not when we imagine them as conscious, but when we let them shape our sense of what it means to be human.

A Mood of Wonder—and Responsibility

A through-line of this talk is that we live in a moment of extraordinary historical responsibility. My tone is polemical at times, but my position is not anti-AI. In fact, I experience large language models with real wonder. I genuinely marvel at the possibilities they unlock.

Who knew that next-token prediction could do this much? Who anticipated the emergent abilities that would appear at scale? Who predicted that a system trained only on text would be able to talk intelligently back to us, generate working code, produce poetry, draft contracts, tutor children, and hallucinate like a 19th-century metaphysician? I feel awe. Wonder. And I feel responsibility.

These new entities are entering our conversational spaces. They are participating in our ways of coordinating action. They are merging into the background of our practices, writing emails, drafting policies, generating “slop,” tutoring kids, offering advice, and mediating our attention. We are participating in the creation of new practices, some of which will crystallize into norms and habits that will shape the human way of being for the next epoch.

We cannot outsource that task to profit-driven CEOs, investors, or the next wave of AI hype-men. And we can’t wait for governments to step in and guide us here. We are the ones who must construct the practices through which these tools enter our homes, our classrooms, our communities, and our ways of living.

AI Is Not “There”

The central philosophical claim of the talk is simple to say but takes some work to unpack: LLMs are not there in our world. They have no direct foothold in the meaningful space of worldhood where embodied presence, shared commitments, moods, language, and care interweave.

Current LLMs are mesmerizing linguistic devices, but they are worldless (in Heidegger’s sense of the term that I explain in the talk). They do not inhabit worlds. Things don’t matter to them. They do not have moods. They do not form commitments, sustain projects, or care about the truth of what they say.

They are, to borrow philosopher John McDowell’s phrase and tweak it for our moment, a frictionless spin in the void: dazzling pattern-completers generating coherent continuations of text without any normative constraint from how things actually are.

I don’t mean this as an insult. I am not calling them “merely” anything. Their worldlessness is the philosophical fact. I think it helps illuminate why hallucinations are not a fixable glitch but an inherent feature of systems trained solely on textual correlation. It explains why they can be astonishingly fluent yet indifferent to truth. It explains why they can simulate assertion without making assertions, simulate empathy without feeling anything, simulate deliberation without having a first-person perspective.

To be “in the world” in the Heideggerian sense is to be bound up with care: care as the orientation through which things matter, through which possibilities are disclosed, through which moods open or close our situations, through which commitments bind us to futures and each other.

The Philosophical Lineage Behind the Talk

Part of what I’m doing with this talk is honoring and reactivating a certain philosophical lineage, that of Dreyfus, Winograd & Flores, and Haugeland, thinkers who never let us forget that intelligence is not computation. Intelligence is world-involvement. Intelligence is skilled coping, embodied know-how, concern, attunement, and responsiveness to significance.

Heidegger pointed out that philosophers often misunderstand being human by treating us as if we were outside agitators to the world, like guests in a strangely unfamiliar kitchen, puzzling over the implements of our lives from a detached vantage point.

But our everyday being-in-the-world is not like that. It is absorbed, skillful, familiar. It is bound up with moods and social norms, and with a background sense of significance that is deeper than any explicit belief.

Fernando Flores and Terry Winograd showed us that language is not merely a conduit for information transfer. Language is the medium of commitments, coordination, shared worlds, promises, requests, declarations. It is how we build futures and bind ourselves to them.

And John Haugeland insisted that caring, or “giving a damn,” is not an optional add-on to intelligence. A being that does not care cannot be a knower. It cannot be bound by truth, by accountability, by the space of reasons and norms in which beliefs can be justified or challenged.

That’s the background of the talk. That’s the tradition I am aiming to revivify through it.

My views have evolved on several points since I first drafted and gave the talk. I try to be in constant conversation with researchers, engineers, neuroscientists, designers, and other thinkers who challenge, enrich, and sometimes overturn my assumptions. I’ve learned more about ongoing attempts to imbue AI with a sense of finitude and care. In fact, I contributed to a new draft research paper on exactly this theme!

The Real Danger of the LLM Era

If there is a danger in this moment, it is not that LLMs will one day wake up and outgrow us. It’s that they might inadvertently (or advertently, as it were) seduce us to live into a reduced and narrowed picture of ourselves.

The danger is thinking that our intelligence, too, is nothing but symbol manipulation—or that our moods, commitments, and bodies are inconvenient add-ons that can be stripped away in pursuit of some pure informational core or disembodied mind-upload.

The danger is the erosion of our sense of what it means to be answerable to reality—answerable to truth, to each other, to our projects, to our promises.

The danger is mistaking the fluency of LLMs for the kind of care-laden understanding that orients us in the world.

As self-interpreting beings, we become what we practice. We create human nature in our shared activities. And if we practice a style of engagement that mirrors the logic of our tools (efficient, frictionless, disengaged, worldless), we risk hollowing out the very capacities that make human intelligence possible: attention, answerability, wonder, anxiety, commitment, and care.

What the Talk Does

If you watch the talk, you’ll hear me weave together several threads:

A critique of substrate-independent fantasies of mind.

A sketch of how care, mood, and embodied presence constitute intelligence.

A defense of the claim that language is world-disclosive, not merely informational.

A meditation on punk, nihilism, and the search for meaningful distinctions in a technological age.

A warning against reducing human life to frictionless prediction.

A call to cultivate practices that keep us attuned to the truth and to each other.

I imagine this talk as one small contribution to a larger conversation, a conversation about how we live with these new tools, and how we avoid shrinking ourselves to fit their contours.

If you’ve been following my work in Without Why, you know that I keep circling back to the same questions: What are we doing to ourselves with our technologies? What possibilities are we cultivating? Which are we foreclosing? What aspects of being human can we still bring forth, especially as these systems grow ever more intertwined with our way of being?

This talk is one more attempt to think through those questions in public.

An Invitation

I hope you’ll watch the talk. And if the themes resonate with you, I hope you’ll stay in the conversation by leaving a comment here or on my YouTube page. These questions matter for anyone trying to remain human in a technological age.

As you watch the video, I invite you to bring two moods with you:

Wonder: a genuine appreciation for what these systems can do, for the strangeness of their emergence, for the uncanny power of next-token prediction.

Responsibility: an awareness that we are the ones shaping the practices through which these tools will enter our world, our homes, and our children’s lives.

“Worldlessness” really is the better term. I’ve been thinking of our (human) exchanges with LLMs as “language-based encounters,” and your framing captures that more intuitively. It keeps the awe without pretending there’s any shared experience or interiority.

So, so good. Two minor suggestions to add as you continue this work:

1/ instead of quoting Johnathan Bi, use the “stochastic parrot” tweet from Sam Altman (borrowing Emily Bender’s phrase). That’s far more recognizable. Source (X): https://share.google/DQjDDniDB2NxtoK0O

2/ LLMs wouldn’t even meet the coherentist’s epistemic standards because they’re so internally incoherent, often in the same conversation. Certainly spinning in a void, and frictionless, but definitely not fully Davidsonian.

Keep it up. This work isn’t just important for diagnosing the conceptual errors; it’s also important for guarding against the moral problem of sloppy psychologistic reductionism.