AI, Pinocchio, and the Evolution of Conscience

Notes on a missing conversation between AI and the study of human cooperation

Note: I finally got it together to add my own voice narration to my substack pieces. See the link at the top of this page. I used Speechify to mimic my voice. It rings a bit uncanny, but I think it works. Let me know what you think!

A Missing Conversation

There is a missing conversation today between the investigators of human evolution and the builders of artificial intelligence. I became convinced of this after attending a dazzling dinner in San Francisco convened by the Leakey Foundation, a non-profit organization “committed to advancing the science of human origins” and named after the anthropologist Louis S.B. Leakey.

On the way to dinner, I walked up and down a block of unaddressed structures, pausing every now and then between buildings to soak up the setting sun and catch glimpses of the Golden Gate Bridge. Dressed in my black denim jacket, black jeans, and squeaky new all-black high-top Chuck Taylors, I caught the eye of a tall man who popped out of a building to take a phone call. He finished his call while I hovered uncertainly by the door. As he came back up, I asked if I had found the right address. In a friendly but serious tone, he asked me, “Are you sure you’re in the right place, buddy?” I replied innocently, “I don’t know, but I’m here to talk about AI and human evolution.” Seriousness gone, affability amplified, he said, “You’ve found the right place, come on in!” He was one of the doormen for the venue hosting the dinner.

The nature and origin of the social norms that structured our whole interaction — e.g., how to mete out the right balance of friendliness and defensiveness when encountering a stranger — would be one of the main topics of the night’s conversation.

The food was delicious and the views were exquisite, but the main event was the conversation that crackled and flowed around the table. The event was carefully curated as a conversation starter between scholars of human evolution and builders and thinkers of AI. It was the inauguration of a whole series on AI and human evolution that the Leakey Foundation is planning.

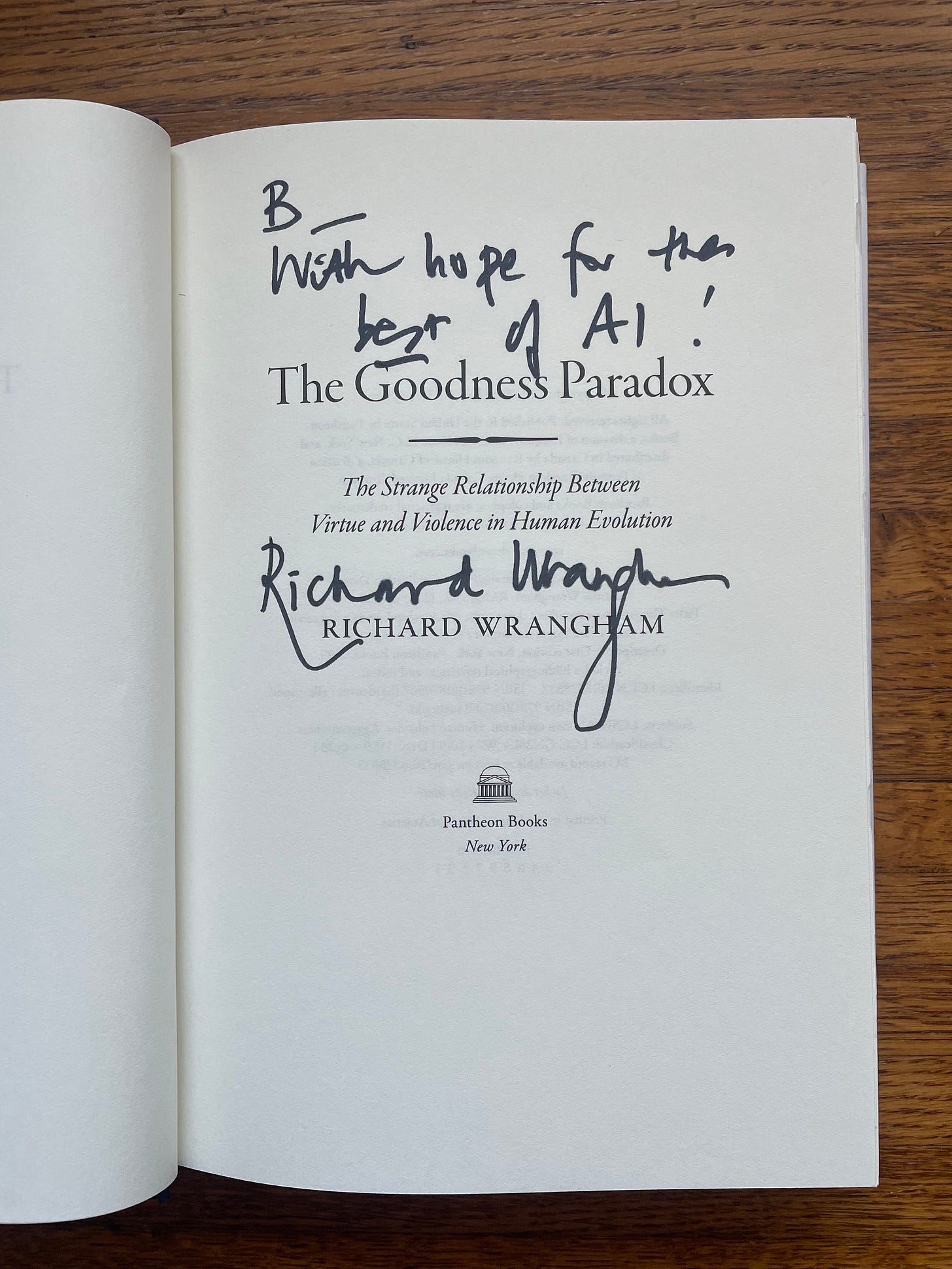

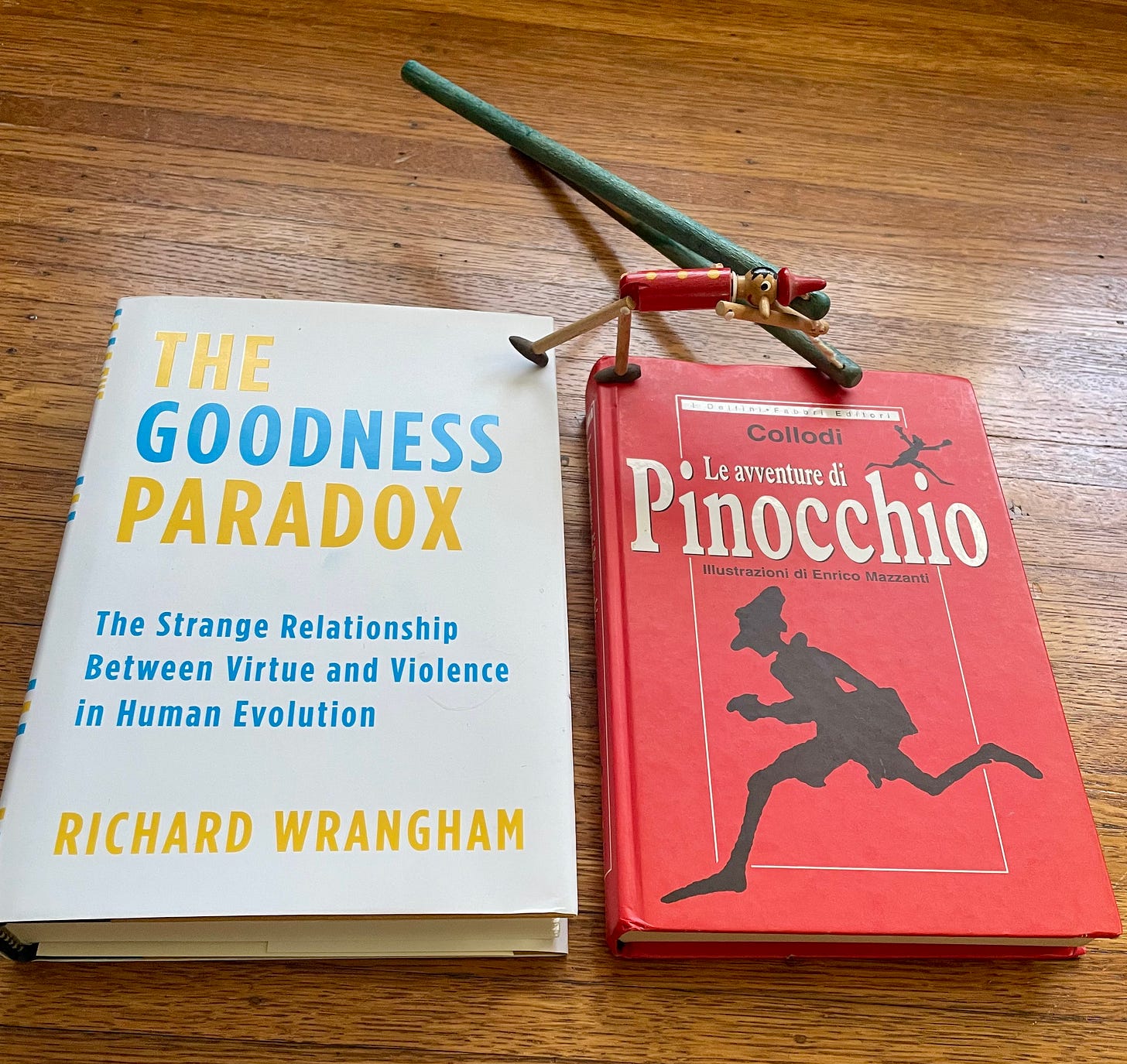

Among the bouquets of fresh flowers, gleaming white plates and shining, polished silverware lining the dinner table were new hardcover copies of a book called The Goodness Paradox: The Strange Relationship between Virtue and Violence in Human Evolution, by Richard Wrangham, professor of Biological Anthropology at Harvard.

At each place setting was a program emblazoned with the Leakey Foundation logo and the title: AI x Evolution. It announced the bold ambitions of the evening thus:

Tonight’s conversation pairs the people building new intelligent, adaptive systems with the scientists who study the original systems.

The Leakey Foundation has spent decades advancing our understanding of human origins, behavior, and survival. Tonight, we bring that knowledge to bear on one of the defining challenges of our time: how to build and integrate AI systems that coexist safely within human societies.

Nature has run a 3.8-billion-year experiment in developing intelligent, adaptive systems. How do complex systems stay robust instead of collapsing? How do selfish agents evolve cooperation? How do independent organisms fuse into a stable whole, cells into bodies, humans into societies? These questions map directly onto AI’s biggest open problems: alignment, robustness, emergent behavior, and coexistence with human beings.

The Leakey Foundation invited two of the world’s leading scholars of human evolution — Richard Wrangham and Robert Boyd — to the dinner. During preliminary mingling, I enjoyed champagne, hors d'oeuvres (including an exquisite mélange of miniature tempura oyster mushrooms, lemon slices, and singular seaweed leaves), and polite but charged philosophical chitchat with Wrangham, Boyd and other invitees.

The conversation drifted from my explanation of Heidegger’s aversion to scientific-naturalistic explanations of human life, to questions about the scientific status of the field of Anthropology itself, and the extent to which its explanations could be reduced to or grounded in the laws of physics, chemistry, and biology.

The premise and promise of this evening were simple and overdue: the people building the most transformative technology of our time and worrying about how its deployment will reshape human society can learn from the people who study how human society itself evolved… and vice versa.

Neither Richard nor Rob had much familiarity with current AI systems. Most of the AI people at the table had never seriously reckoned with what the study of human evolution reveals about the foundations of human intelligence in our abilities for social cohesion and cooperation.

Professors Wrangham and Boyd disagree regarding the specific mechanism of the evolution of human cooperation — concerning, e.g., group violence vs. “softer” forms of cultural influence — but they both drove home the profound interrelation between human intelligence and human cooperation.

So-called “human-level” intelligence is pervasively shaped by our evolved capacity for cooperation, for orienting ourselves according to shared social norms, for taking on the perspective of others, and for acting in terms of what we sometimes call “conscience.” AI and alignment research would do well to reckon with all of this.

An old question lurks unnoticed beneath every serious conversation about AI alignment, including the one that unfolded over this dinner. Friedrich Nietzsche asked it in 1887: How do you create an entity with the right and ability to make promises?

Let me develop this thought, while further elucidating where Wrangham and Boyd disagree about the evolution of social cooperation. It starts with a puppet.

Who would have guessed that a conflict in the interpretation of the Pinocchio story would map onto the debate between Wrangham and Boyd about the evolution of human conscience and cooperation? The puppet at the center of that story is a small, wooden version of Nietzsche's question: How do you make a creature who can make and keep a promise?

Digging into these questions illuminates a fundamental, but still largely unrecognized, issue in AI alignment: how to train AI to attune to the shared social norms that enable our cooperation? What if social norms, and the ability to act according to them, are more important for AI alignment than individual preferences?

Pinocchio and Pangs of Conscience

Probably everyone knows the story of Pinocchio, perhaps only as a vague, nightmarish memory of being frightened by the cartoon as a child. It tells of a wooden puppet, an artificial being set on the path to becoming a real boy (sound familiar?), and the trials and tribulations he must endure along the way.

The original story is from an 1883 novel called The Adventures of Pinocchio by Carlo Collodi. Disney adapted it into an animated movie in 1940. In the Disney version, the Blue Fairy grants Pinocchio life and appoints a cricket named Jiminy as his conscience. Pinocchio’s journey to becoming a real boy is a moral journey of learning to listen to that cheerful little voice instructing him on how to integrate into the real human world.

The Blue Fairy tells Pinocchio, “Now remember Pinocchio, be a good boy, and always let your conscience be your guide.” After she departs, Jiminy Cricket gives his first lessons in how to summon and listen to the call of conscience.

But Jiminy confuses himself and Pinocchio in trying to explain how to navigate the moral ambiguity of real life. He drops into whistle and song instead, helping Pinocchio memorize the moral maxim to “Always let your conscience be your guide.” Jiminy acts as Pinocchio’s moral exemplar and mentor, helping him internalize a sense of conscience and responsibility that will enable him to be a real boy.

When Pinocchio demonstrates loyalty, courage, and selflessness — risking his life to save Geppetto from the belly of the whale Monstro — he earns his transformation. Conscience, in Disney’s telling, is a gift and a friendly companion, faithfully distinguishing right and wrong for you, and ushering you to full participation in the human community. Along the way, even in Disney’s version, Pinocchio endures punishment and humiliation.

Carlo Collodi’s original gives a very different version. In Collodi, the Talking Cricket — Grillo Parlante — has no name. The smarmy but cute “Jiminy Cricket” is a Disney invention. Collodi’s Grillo Parlante appears in Chapter 4 and sternly dispenses some sensible moral advice: go to school, obey your father, don’t run off. Here, Pinocchio’s immediate response is to hurl a mallet at the Cricket, smashing it against the wall. The first rub of social constraint evokes an outbreak of immediate, lethal violence.

What follows is a long regime of catastrophic consequences. Pinocchio’s feet burn off, and he is swindled, imprisoned, and hanged by assassins. Collodi originally ended the serialized story right there, with Pinocchio dangling dead from a tree. Only when the publisher demanded more installments did the puppet get a reprieve. He is subsequently transformed into a donkey, thrown into the sea, and swallowed by a giant shark. The Cricket returns as a nagging ghost, and Pinocchio still resists the lessons, until he finally breaks and owns up to the social and moral demands being made upon him.

Disney keeps some of the frightening episodes, including Stromboli's cage, Pleasure Island, and the shark (turned into a whale), but softens the relentlessness of Collodi's disciplinary regime.

In Collodi’s Pinocchio, conscience first appears as an annoying, external voice that the puppet tries to silence by force. Only through a long sequence of humiliations and painful punishments does that external rebuke begin to be interiorized as his own moral sense and conscience.

Richard Wrangham would approve of this violence-saturated interpretation of the emergence of Pinocchio’s sensitivity to the call of conscience and “normative competence.” Rob Boyd’s account of the evolution of human cooperation is closer to Disney’s version.

The Right to Make Promises

I think Nietzsche would have been a fan of Collodi’s story. The Second Essay of his On the Genealogy of Morals (1887) opens with a question that could serve as the epigraph for Collodi’s novel (and the AI alignment discourse itself):

“To breed an animal [or a wooden puppet? or an AI?] with the right to make promises — is that not the paradoxical problem nature has set herself with regard to humankind?”

(All Nietzsche quotations just below are from this essay. The brackets inserted in the quotation above are from me.)

Think about what an awesome power this is. Making a promise means placing a stake in an unknown future, holding it in memory, then binding yourself and steadfastly guiding your subsequent actions to bring it about. It requires memory, calculation, self-discipline, the ability to mould your motivations. It requires the capacity to see yourself as others see you (especially the ones you made the promise to) and to be motivated to live up to their expectations.

Promising requires, as Nietzsche put it, that a person “must have become not only calculating but himself calculable, regular even to his own perception, if he is to stand pledge for his own future as a guarantor does.”

Nietzsche contended that this capacity did not arrive gently. He traces what he calls “the long history of the origins of answerability” through a harrowing prehistory of pain, ritual cruelty, and social enforcement.

“By such methods [e.g., stoning, breaking on the wheel, drawing and quartering, etc.] the individual was finally taught to remember five or six ‘I won’ts’ which entitled him to participate in the benefits of society; and indeed, with the aid of this sort of memory, people eventually ‘came to reason’ [‘came to their senses’]. What an enormous price humanity had to pay for reason, seriousness, control over his emotions—those grand human prerogatives and cultural showpieces! How much blood and horror lies behind all ‘good things’!”

In Collodi’s story, we find an aspiring “rational animal” in miniature, the little wooden puppet Pinocchio who longs to become a boy. A crafted creature who must be transformed into the kind of being that can keep promises and regulate its behavior according to social expectations.

Collodi’s puppet doesn’t become a real boy by passing benchmark tests or by becoming a “highly autonomous system that outperforms humans at most economically valuable work” (OpenAI’s definition of AGI), but through a deeply embodied, always emotional, sometimes painful, and ultimately transformative encounter with others who showed him what it takes to be the kind of agent whose word, whose deeds, and whose care for others really count.

Self-Domestication

Wrangham and Boyd, in their own ways, are trying to answer Nietzsche's question about the origin of promising with the tools of evolutionary science.

Wrangham’s thesis in The Goodness Paradox is that our species was shaped by something he calls “self-domestication.” In this, it is as though Wrangham took Nietzsche’s incisive and shocking psychological speculations and transmuted them into an empirical hypothesis to be validated in the terms of evolutionary theory.

According to Wrangham, once language and weapons allowed coalitions to coordinate safely against dominant male bullies, alliances of less aggressive males could conspire to punish and sometimes execute the most reactively violent and domineering individuals through gossip, ostracism, and, in extreme cases, death.

Over thousands of generations, such group policing created selection pressures that reduced reactive aggression while preserving and enhancing our capacity for proactive, prosocial, coordinated action (including “proactive aggression” against norm breakers).

On this account, conscience and “moral sense” evolved as reputation-management and pain-avoidance systems tuned to the expectations and sanctions of dominant coalitions. Our forebears learned to track what the group demanded and to conform, because the alternative was censure, exclusion, or death.

Boyd’s framework emphasizes a role for the softer influence of culture, and gene–culture coevolution. For Boyd, human normative competence evolved because the ability to internalize social norms enabled unusually large-scale cooperation. Cultural transmission—imitation, conformist and prestige biases—together with moralistic, sometimes violent, punishment of norm violators helps sustain cooperation among genetically unrelated individuals.

In such environments, norms that begin as external constraints backed by sanctions become internalized as felt commitments: people do not merely comply with them to avoid punishment, they care about them and are motivated to see others comply and care about them as well. Violence still plays a role, but not as centrally as we find in Wrangham.

Both Wrangham and Boyd converge on the following: the fear of social ostracism and the fear of violent punishment are central to how human “perspective taking,” conscience and the capacity to follow social norms arose in the first place.

We did not reason or contract our way into social propriety and morality. We were disciplined into it over evolutionary timescales and then we internalized the discipline until it became our very conscience, our own inner “Jiminy Cricket” whose shrill voice keeps us in line before we act out.

Building Pinocchio with “Normative Competence”

The conception of intelligence that is taken for granted in much of the AI field can be pretty narrow. Intelligence gets treated as a capacity of an individual system to solve problems or efficiently learn to solve problems and complete tasks in well-defined game spaces.

The recent documentary about the brilliant Demis Hassabis, The Thinking Game, reveals and partakes of this narrow framing of intelligence, which is not to say that Hassabis himself does so. François Chollet convincingly criticizes the tendency to define intelligence by how well systems perform tasks and score points in pre-structured games (or benchmark tests).

Wrangham and Boyd both emphasize that human intelligence is deeply shaped and guided by our evolved sensitivity to social norms and social expectations, what some researchers are calling our “normative competence.”

At the International Association for Safe and Ethical AI (IASEAI) meeting in Paris back in February, in addition to the above-pictured session on normative competence, I was fortunate to catch some presentations by Gillian Hadfield.

Hadfield is one of the most important voices in this conversation, focused on the issue of how to train AI to be responsive to what she calls the “normative infrastructure” of human society rather than just individual preferences. For an overview, see her talk “Alignment is Social: Lessons from Human Alignment for AI.”

(Thanks to Sandy Tanwisuth, a new friend whom I met at the Berkeley Center for Human Compatible AI, for reminding me about Hadfield’s research.)

“Human-level” intelligence evolved entangled with our capacity for social cooperation. Our capacity for social cooperation is founded on our ability to act according to social norms — to track what is appropriate and inappropriate, to see ourselves from the perspective of others, to feel the weight of shared expectations, to be answerable for what we do and say.

Human normative competence is preserved, refined, and sometimes transformed through our institutions: our families, our schools, our legal systems, our workplaces, our religions, our punk scenes. The whole apparatus of cooperative social life is an apparatus for producing and sustaining normative competence.

How might the field of AI and the project of AI alignment learn from this? How can further intermingling of AI research with research into human evolution and cooperation advance our understanding of these issues? I am encouraged that the Leakey Foundation plans to continue to promote such an exciting and promising area of research.

We are just beginning to question what it would take to build anything like that ourselves . . . as Richard himself noted in his inscription for my copy of his book:

A Punk Coda

After dinner, I enjoyed lingering in conversation with some of the folks at the Leakey Foundation. I found myself the last person to leave. The doorman who welcomed me at the beginning of the night invited me to sit inside as I waited for my ride. He and a second doorman engaged me in friendly banter. As is my wont, I managed to bring up the fact that I’m a drummer who plays in punk bands.

Punk is much more than a style of music. It is first of all a community and attitude dedicated to questioning the social norms and habits of the nihilistic rat race of technological modernity. It turns out that the doorman who wondered if this punk-looking guy dressed in all black (me) had wandered into the wrong place earlier that evening used to go to punk shows himself in San Francisco in the early 1980s. He warmly reminisced with me about some of the venues he frequented, such as Mabuhay Gardens (which recently reopened).

I asked if he had ever seen Dead Kennedys, one of the most formative punk influences upon me, but whom I never got to see live myself. “Oh definitely!” Then he asked hesitantly if I was the drummer in Dead Kennedys. “Nope,” I said, unreasonably flattered by his innocent question, “just a huge fan.” His associate exclaimed, “The Dead Kennedys were like the Led Zeppelin of punk!” Adding: “Damn, I’ve known this guy for thirty years and I never knew that he was going to punk shows back then!” I got into my ride savoring this fleeting moment of unexpected punk communion.